Forget the chatbot wars. The real fight in 2026 isn't about which AI can write you a better cover letter; it's about which lab controls the operating system layer of artificial intelligence itself: the sovereign reasoning stack that sits beneath every enterprise workflow, every agentic coding run, every edge device, and every national security apparatus on the planet. The prize isn't a subscription. It's infrastructural dominance over the post-AGI economy.

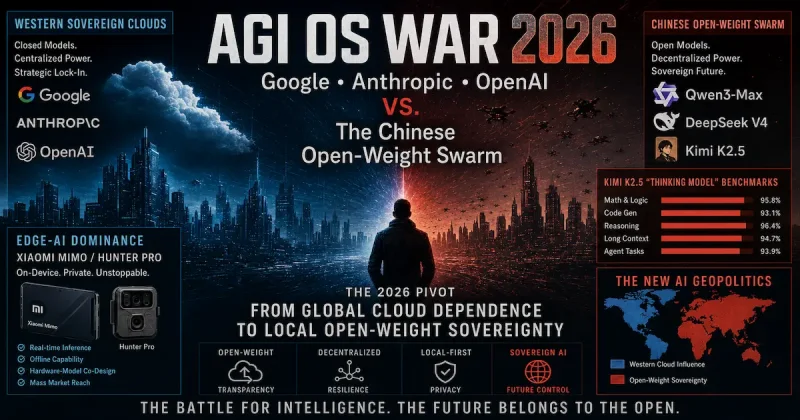

To understand how we got here, you need to accept one uncomfortable truth: the AI race of 2023–2024 (the one measured in chatbot response quality and DALL-E image aesthetics) is already dead. What replaced it is something far more consequential, far more geopolitically loaded, and almost completely misunderstood by mainstream technology coverage. As analyst Salvador Vilalta documented in April 2026, the AGI race has split into two parallel wars operating on different continents, with different economics, different licensing philosophies, and radically different endgame visions.

From "Model as Product" to "Model as Operating System"

The watershed moment came not with a single model release but with a cumulative realization that hit CIOs, defense ministries, and sovereign wealth funds simultaneously in late 2025: dependency on a single cloud provider's frontier model was a geopolitical liability of the first order. Every ChatGPT Enterprise call routed through Microsoft Azure was a potential choke point. Every Claude API ping was subject to Anthropic's acceptable use policies, U.S. export controls, and the vagaries of an $840 billion valuation that could evaporate. The world had built its cognitive infrastructure on rented land.

The shift that followed was structural, not cosmetic. The new paradigm isn't a model you query; it's a "Thinking Model OS": a full-stack reasoning system that combines a frontier-grade language model with persistent agentic memory, tool-use orchestration, multimodal perception, and the ability to run locally on sovereign hardware, free from any external API dependency. The model isn't the product. The model is the kernel. Everything else (the enterprise SaaS layer, the edge deployment, the national AI infrastructure) runs on top of it.
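To make the "model as kernel" idea concrete, here is a minimal Python sketch of the layering described above: a kernel decides what to do, an orchestration layer dispatches local tools, and a memory list persists across tasks. Everything here (the `ThinkingModelOS` class, `toy_kernel`, the `calc` tool) is hypothetical and illustrative; the kernel is a stub standing in for a locally hosted model, not any lab's actual runtime.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# A kernel decision is either ("call", tool_name, argument) or ("answer", text, "").
Decision = Tuple[str, str, str]
# Hypothetical stand-in for a locally hosted model: (task, memory) -> Decision.
Kernel = Callable[[str, List[str]], Decision]


@dataclass
class ThinkingModelOS:
    kernel: Kernel
    tools: Dict[str, Callable[[str], str]] = field(default_factory=dict)
    memory: List[str] = field(default_factory=list)  # persists across run() calls

    def register_tool(self, name: str, fn: Callable[[str], str]) -> None:
        self.tools[name] = fn

    def run(self, task: str, max_steps: int = 8) -> str:
        for _ in range(max_steps):
            action, arg1, arg2 = self.kernel(task, self.memory)
            if action == "answer":
                self.memory.append(f"answer:{arg1}")
                return arg1
            # Orchestration layer: dispatch the requested tool in-process
            # (no external API call) and record the result in memory.
            result = self.tools[arg1](arg2)
            self.memory.append(f"{arg1}({arg2})={result}")
        raise RuntimeError("step limit reached without an answer")


def toy_kernel(task: str, memory: List[str]) -> Decision:
    """Toy policy: reuse a remembered calc result if one exists, else call calc."""
    prefix = f"calc({task})="
    for entry in reversed(memory):
        if entry.startswith(prefix):
            return ("answer", entry[len(prefix):], "")
    return ("call", "calc", task)


if __name__ == "__main__":
    stack = ThinkingModelOS(kernel=toy_kernel)
    stack.register_tool("calc", lambda expr: str(sum(int(x) for x in expr.split("+"))))
    print(stack.run("2+3"))  # prints 5
    print(stack.run("2+3"))  # prints 5 again, served from memory
```

The second identical request is answered from persistent memory without invoking the tool again, which is the kind of statefulness that separates an OS-style stack from a stateless chatbot endpoint.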

This reframing has three enormous consequences. First, benchmark leaderboards stopped being about single-task accuracy and started being about agentic throughput: how many real-world software engineering tasks, legal research loops, or autonomous coding agent sessions a model can complete end-to-end without human intervention. Second, the licensing question (open-weight vs. proprietary) transformed from a philosophical debate into a strategic sovereignty calculation. Third, the geography of the war shifted: Western "Sovereign Cloud" architectures collided head-on with China's open-weight swarm strategy.

The Three-vs-Many Architecture of the 2026 AI War

On the Western side, the field has narrowed dramatically. The five-lab map that analysts used in 2024 has collapsed to three credible frontier players: OpenAI, Anthropic, and Google DeepMind. Meta's Llama strategy has been outflanked: the very open-source space it pioneered is now owned by Alibaba's Qwen and DeepSeek. xAI's Grok remains a consumer product tethered to a social media platform, not an enterprise OS contender. What remains is a trio of Western labs, each executing a distinct "OS capture" strategy:

- OpenAI is pursuing consumer ubiquity at scale: ChatGPT embedded in every interface, a $730 billion pre-money valuation, and a Pentagon relationship that frames AI access as a national security asset.

- Anthropic is positioning itself as the trusted defensive infrastructure layer: Project Glasswing united Apple, Google, Microsoft, AWS, and Nvidia under a single cybersecurity coalition, with Anthropic's Claude Mythos as the connective tissue.

- Google DeepMind is playing the distribution game: Gemini 3 embedded in Search, Workspace, Android, and Chrome, with YouTube as the largest proprietary training dataset in human history and Sergey Brin back running day-to-day operations.

All three share a common architectural assumption: the intelligence lives in the cloud, the value accrues to the platform, and sovereignty is managed through contractual guarantees rather than local compute. This is the "Sovereign Cloud" thesis, and it is precisely what China's open-weight swarm has spent two years systematically dismantling.

China's Open-Weight Swarm: The Geopolitical Weapon Hidden in MIT Licenses

The Chinese strategy is structurally different and, in many respects, more sophisticated. Rather than concentrating frontier capability in a single closed platform, Beijing's AI ecosystem, through deliberate coordination between Alibaba, DeepSeek, Moonshot AI, Zhipu, ByteDance, and MiniMax, has weaponized open-weight model releases as a geopolitical market-capture mechanism.

The numbers are staggering. Chinese models crossed 45% of total model traffic on OpenRouter in April 2026, with aggregate weekly volume from the major Chinese labs matching the combined usage of the top three Western providers at prices six to ten times lower. This isn't accidental disruption. It is a deliberate commoditization strategy: drive model inference costs toward zero, flood the Global South and price-sensitive enterprise market with permissively licensed weights, and establish architectural lock-in at the infrastructure level before Western labs can respond.

The mechanism is elegant. When DeepSeek releases V4 under MIT (a 1.6-trillion-parameter MoE model with a 1,000,000-token context window), any organization in the world can download it, fine-tune it on sovereign hardware, and operate it entirely outside U.S. jurisdiction. No API calls to Amazon Web Services. No compliance with OpenAI's terms of service. No vulnerability to export control policy changes in Washington. The "Thinking Model OS" runs locally, and the operating system vendor is effectively anonymous, decentralized, and unfireable.

| Strategic Axis | Western Sovereign Cloud | Chinese Open-Weight Swarm |
|---|---|---|
| Primary Labs | OpenAI, Anthropic, Google DeepMind | DeepSeek, Alibaba Qwen, Moonshot (Kimi), Zhipu (GLM), ByteDance, MiniMax |
| Access Model | Hosted API, enterprise contracts, usage-based billing | Open weights (MIT / Modified MIT / Apache 2.0) + hosted API option |
| Sovereignty Promise | Contractual SLAs, data residency guarantees, compliance certifications | Local compute, no external API dependency, full weight ownership |
| Pricing Posture | Premium-tier, margin-positive API pricing | Aggressive cost destruction: DeepSeek V4-Flash at $0.28/M output tokens |
| "Thinking Model" Flagship | Claude Opus 4.7 (Anthropic), GPT-5.5 (OpenAI), Gemini 3.1 Pro (Google) | Kimi K2.5/K2.6 (Moonshot), DeepSeek V4-Pro, GLM-5.1, Qwen 3.6-Max |
| Agentic Architecture | Task budgets, tool orchestration, enterprise agent frameworks | Agent Swarm (Kimi), MoE parallel execution, long-context agentic loops |
| Edge Deployment | Limited; primarily cloud-first architectures | Aggressive: Xiaomi Mimo/Hunter Pro, device-native inference |
| Geopolitical Endgame | Platform lock-in via ecosystem integration and premium features | Architectural dependency via open-weight ubiquity and price dominance |

Why "Thinking" Is the New Battleground Metric

The technical term "Thinking Model" deserves precision, because it has become the decisive benchmark category in the 2026 OS war. A Thinking Model is not simply a large language model; it is a system capable of extended chain-of-thought reasoning, self-verification, interleaved tool use, and multi-step problem decomposition across a long context window, operating autonomously over minutes or hours rather than responding in seconds.
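The propose, verify, and retry cycle at the heart of this definition can be sketched in a few lines. This is a toy under stated assumptions: `think`, `propose_root`, and `verify_root` are hypothetical names, and an integer square-root search stands in for a real reasoning task. The point is the control flow, in which each draft is checked and the verifier's hint feeds the next attempt, not any particular lab's implementation.

```python
from typing import Callable, List, Optional, Tuple

# One reasoning step: (candidate, verified?, hint from the verifier)
Step = Tuple[int, bool, Optional[str]]


def think(problem: int,
          propose: Callable[[int, List[Step]], int],
          verify: Callable[[int, int], Tuple[bool, Optional[str]]],
          max_steps: int = 8) -> Tuple[Optional[int], List[Step]]:
    """Draft, self-check, and retry until a candidate verifies or steps run out."""
    trace: List[Step] = []
    for _ in range(max_steps):
        candidate = propose(problem, trace)    # draft conditioned on prior attempts
        ok, hint = verify(problem, candidate)  # independent check of the draft
        trace.append((candidate, ok, hint))
        if ok:
            return candidate, trace
    return None, trace


def propose_root(n: int, trace: List[Step]) -> int:
    # Narrow the search interval using the verifier's earlier hints.
    lo = max([c + 1 for c, _, h in trace if h == "low"], default=0)
    hi = min([c - 1 for c, _, h in trace if h == "high"], default=n)
    return (lo + hi) // 2


def verify_root(n: int, x: int) -> Tuple[bool, Optional[str]]:
    square = x * x
    if square == n:
        return True, None
    return False, ("high" if square > n else "low")


if __name__ == "__main__":
    answer, trace = think(49, propose_root, verify_root)
    print(answer, [c for c, _, _ in trace])  # prints 7 [24, 11, 5, 8, 6, 7]
```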

The distinction matters enormously for the OS thesis. A chatbot answers questions. A Thinking Model OS solves problems: writing and debugging entire software codebases, conducting multi-hour legal research loops, and orchestrating agent swarms that break complex work into parallel pieces. This is precisely the architecture that Moonshot AI's Kimi K2.5 introduced in January 2026: a 1-trillion-parameter MoE model with 384 experts, a 256K context window, native multimodality, and what Moonshot calls "Agent Swarm", a self-directed, coordinated execution scheme in which K2.5 decomposes complex tasks into parallel sub-tasks executed by dynamically instantiated, domain-specific agents.
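A rough sketch of the decompose-then-fan-out pattern, assuming Python's `asyncio` for concurrency: a hypothetical planner (`decompose`) splits a compound task into domain-tagged sub-tasks, each sub-task is handed to a stand-in agent (`run_agent`), and the results are gathered in order. This is not Moonshot's Agent Swarm implementation, whose internals are not described here; in a real system `run_agent` would call the model with a domain-specific prompt and toolset.

```python
import asyncio
from typing import Dict, List


def decompose(task: str) -> List[Dict[str, str]]:
    """Hypothetical planner: split 'domain: goal; domain: goal' into sub-tasks."""
    subtasks = []
    for part in task.split(";"):
        domain, _, goal = part.partition(":")
        if domain.strip():
            subtasks.append({"domain": domain.strip(), "goal": goal.strip()})
    return subtasks


async def run_agent(domain: str, goal: str) -> str:
    # Stand-in for a dynamically instantiated domain agent; a real one would
    # call the model with a domain-specific prompt and tools.
    await asyncio.sleep(0.01)
    return f"[{domain}] done: {goal}"


async def swarm(task: str) -> List[str]:
    subtasks = decompose(task)
    # Sub-tasks run concurrently; gather() returns results in submission order.
    results = await asyncio.gather(*(run_agent(s["domain"], s["goal"]) for s in subtasks))
    return list(results)


if __name__ == "__main__":
    for line in asyncio.run(swarm("code: write parser; test: add unit tests; docs: update README")):
        print(line)
```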

The benchmark that most clearly captures this distinction is not MMLU-Pro or GPQA-Diamond, both of which measure static knowledge retrieval. The decisive benchmarks of 2026 are SWE-Bench Pro (real-world software engineering on a full codebase), Terminal-Bench 2.0 (autonomous terminal operation), and HLE-Full with tools (Humanity's Last Exam with live tool access), all of which measure sustained agentic reasoning under real-world conditions. These are operating system metrics, not chatbot metrics.
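What makes these operating-system metrics rather than chatbot metrics is the scoring rule: a task only counts if the agent's end-to-end work passes an executable check. The sketch below is loosely modelled on SWE-Bench-style scoring, where a task is resolved only if the tests the fix targets now pass and previously passing tests still pass. The `TaskResult` fields and the `resolved_rate` helper are illustrative assumptions, not the official evaluation harness.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class TaskResult:
    task_id: str
    fail_to_pass: Dict[str, bool]  # tests the agent's patch is meant to fix
    pass_to_pass: Dict[str, bool]  # tests that must keep passing (no regressions)


def is_resolved(r: TaskResult) -> bool:
    # All-or-nothing: partial fixes and fixes that break other tests score zero.
    return all(r.fail_to_pass.values()) and all(r.pass_to_pass.values())


def resolved_rate(results: List[TaskResult]) -> float:
    """Percentage of tasks resolved end to end."""
    if not results:
        return 0.0
    return 100.0 * sum(is_resolved(r) for r in results) / len(results)


if __name__ == "__main__":
    runs = [
        TaskResult("t1", {"test_bug": True}, {"test_old": True}),   # clean fix
        TaskResult("t2", {"test_bug": True}, {"test_old": False}),  # fix with regression
        TaskResult("t3", {"test_bug": False}, {"test_old": True}),  # fix failed
    ]
    print(f"{resolved_rate(runs):.1f}%")  # prints 33.3%
```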

And on those metrics, the Chinese open-weight swarm is not trailing the Western frontier by a comfortable margin. It is, in several cases, leading it. Kimi K2.6 posts 54.0 on HLE-Full with tools, outperforming GPT-5.4 (52.1), Claude Opus 4.6 (53.0), and Gemini 3.1 Pro (51.4) on that benchmark. Zhipu's GLM-5.1 posts 92.7% on AIME 2026 and 86.0% on GPQA-Diamond. DeepSeek V4-Pro claims 80.6% on SWE-Bench Verified. These are not "good for open-source" numbers. These are frontier numbers available for free download, deployable on sovereign hardware, unreachable by export control or API throttling.

The 2026 Pivot: From API Dependency to Local Sovereignty

The geopolitical pressure crystallizing this shift is unmistakable. Italy's data protection authority restricted DeepSeek in early 2025. Multiple U.S. states moved to limit Chinese model use on government devices. The White House formally accused Chinese actors of running industrial-scale distillation campaigns against U.S. frontier models: essentially, systematic intellectual property extraction at algorithmic scale. The response from governments and large enterprises in the Global South and in Europe was not to pick a side. It was to ask a different question entirely: what does it mean to run AI on infrastructure we actually control?

The answer to that question, delivered simultaneously by CIOs in Jakarta, Riyadh, São Paulo, and Warsaw, is the 2026 pivot from global cloud dependence to local open-weight sovereignty. It is not ideological. It is actuarial: the risk calculus of depending on either Washington's or Beijing's cloud infrastructure has become unacceptable for any organization managing sensitive data, critical infrastructure, or national security workloads. The Thinking Model OS that runs on your own hardware, on your own network, under your own legal jurisdiction, using weights you downloaded and own: that is the product the market is actually buying in 2026.

This is the terrain on which the AGI OS War is being fought. Not in benchmark press releases. Not in consumer app store rankings. In the quiet, consequential decisions of procurement officers, defense ministries, and sovereign AI councils choosing which weights to run, on which chips, under which legal framework, and whether the answer to that question will ever route through a Western cloud provider's billing department again.

2) Western Sovereign Clouds: Google, Anthropic, and OpenAI's Strategy

The first thing to understand about the Western sovereign cloud strategy is that it is not a single strategy. It is three distinct bets made by three radically different organizations, with three different theories of how AGI gets deployed, monetized, and controlled, all converging on the same operational conclusion: the company that controls the infrastructure layer beneath the model controls everything. The model is the product. The cloud is the moat. And in 2026, the moat is being fortified not just with capital and compute, but with policy, chip architecture, cryptographic identity, and enterprise contracts so deeply embedded in organizational workflows that switching becomes structurally impossible.

Understanding this requires stepping back from the benchmark noise and examining what Salvador Vilalta's April 2026 analysis correctly identified: the AGI race in the West has consolidated from five contenders to three. OpenAI, Anthropic, and Google DeepMind are no longer simply model labs competing on MMLU scores. They are constructing vertically integrated AGI operating systems, each with a proprietary compute tier, a regulatory positioning strategy, an enterprise distribution network, and an increasingly explicit political identity. The question of which model is smarter has become secondary to the question of which infrastructure you can be legally, politically, and contractually permitted to run.

The Strategic Architecture: Four Pillars of Western Cloud Control

Western sovereign cloud strategy, as practiced by all three remaining frontier labs, rests on four interlocking pillars that together create a system far stickier than any individual model's performance advantage. These are: policy capture (embedding AI labs into the regulatory and national security apparatus of Western governments), compute sovereignty (controlling the chip supply chain from training cluster to edge inference), cryptographic identity (building verified-model attestation and data provenance into the deployment stack), and enterprise lock-in (integrating so deeply into existing SaaS workflows that the cost of migration exceeds any open-weight alternative's price advantage). Each of the three labs executes these pillars differently, with different leverage points and different risk profiles.

| Strategic Pillar | OpenAI / Microsoft | Anthropic / Amazon | Google DeepMind |
|---|---|---|---|
| Policy Capture | Pentagon AI contract (Feb 2026); White House advisory access; NIST AI Safety Institute collaborator | Project Glasswing (45+ org defensive coalition); UK AI Safety Institute founding partner; EU AI Act early compliance | NATO AI advisory; EC DG Connect working groups; GCHQ model evaluation partnerships |
| Compute Sovereignty | Azure AI infrastructure; $110B round includes Nvidia/SoftBank; Stargate data center build | AWS Trainium/Inferentia native; $100M Project Glasswing usage credits; Anthropic-specific inference clusters | TPU v6 (Trillium) internal silicon; Google Cloud as primary inference; YouTube training data moat |
| Cryptographic Identity | Azure Confidential Compute; model provenance via Microsoft Entra attestation | Constitutional AI audit logs; Model cards with safety certifications; Mythos Preview restricted key issuance | Vertex AI provenance tracking; Google Workspace identity layer; Android Trust APIs |
| Enterprise Lock-In | ChatGPT Enterprise; Microsoft 365 Copilot; GitHub Copilot in dev workflows | Claude for Business; Bedrock API; Claude Design workflow integration | Gemini in Workspace; Chrome/Android distribution; Search integration at 4B+ user scale |
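The "cryptographic identity" pillar reduces, at its simplest, to checking that the weights being served are the weights a vendor vouched for. The following is a minimal sketch under loud assumptions: the manifest format and function names are hypothetical, and HMAC-SHA256 with a shared key stands in for the asymmetric signatures and hardware attestation that production provenance systems would use.

```python
import hashlib
import hmac
import json


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def sign_manifest(manifest: dict, key: bytes) -> str:
    # HMAC-SHA256 over canonical JSON; a stand-in for a real asymmetric signature.
    payload = json.dumps(manifest, sort_keys=True).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def attest(weights: bytes, manifest: dict, signature: str, key: bytes) -> bool:
    """True only if the manifest is authentic and the weights match its hash."""
    if not hmac.compare_digest(sign_manifest(manifest, key), signature):
        return False  # manifest altered, or signed with a different key
    return hmac.compare_digest(manifest["sha256"], sha256_hex(weights))


if __name__ == "__main__":
    key = b"hypothetical-vendor-key"
    weights = b"model weights go here"
    manifest = {"model": "example-model", "version": "1.0", "sha256": sha256_hex(weights)}
    sig = sign_manifest(manifest, key)
    print(attest(weights, manifest, sig, key))         # prints True
    print(attest(weights + b"!", manifest, sig, key))  # prints False (tampered weights)
```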

OpenAI and the $840 Billion Bet on Consumer-to-Government Saturation

OpenAI's sovereign cloud theory is the most aggressive and the most legible. Having closed a $110 billion funding round with Amazon, Nvidia, and SoftBank at a post-money valuation of $840 billion, Sam Altman has made a single, enormous bet: that the company with the largest consumer installed base (ChatGPT's hundreds of millions of monthly active users) will inevitably translate that consumer penetration into enterprise and eventually government adoption through sheer gravitational force. The logic is Microsoftian in its DNA, which is not coincidental given Microsoft's foundational stake in the company and the company's reliance on Azure infrastructure.

The Pentagon AI contract announced in late February 2026 was the operational expression of this thesis. By embedding ChatGPT-class models into U.S. Department of Defense workflows, however controversially, OpenAI established a template: consumer ubiquity creates the political and bureaucratic familiarity that makes government procurement feel safe rather than experimental. The model a defense analyst uses at home on Saturday evening becomes the model that passes the CIO's vendor evaluation on Monday morning. This is not an accident. It is the most sophisticated marketing funnel in the history of enterprise software, and it runs on $840 billion of capital backstop.

The Stargate data center initiative (OpenAI's joint venture with SoftBank and Oracle, with the U.S. government as implicit infrastructure partner) is the physical expression of sovereign cloud ambition. Stargate is not just a training cluster. It is a claim on the geographic and legal soil of American AI infrastructure: a declaration that the compute substrate for the world's most used AI system will be physically resident in the United States, subject to U.S. law, auditable by U.S. regulatory bodies, and inaccessible to foreign adversaries. For European and Global South enterprise customers evaluating whether to route their cognitive workloads through OpenAI's APIs, Stargate's geographic concentration is simultaneously the product's strongest security argument and its most significant sovereignty liability.

Anthropic's Platform Pivot: From Model Lab to Defensive Cognitive Infrastructure

Anthropic's sovereign cloud strategy is categorically different from OpenAI's and, arguably, more durable. Where OpenAI bets on consumer saturation and Google bets on distribution volume, Anthropic has identified a more defensible position: trust infrastructure. The company is repositioning itself not as the maker of the best model, but as the maker of the most certifiable model: the one that large enterprises, regulated industries, and Western governments can deploy with documented safety guarantees, auditable behavioral boundaries, and verifiable alignment properties that no open-weight Chinese alternative can credibly match without surrendering the regulatory compliance argument entirely.

Project Glasswing, the defensive coalition Anthropic announced in April 2026, unites AWS, Apple, Google, Microsoft, Nvidia, Cisco, CrowdStrike, JPMorgan Chase, the Linux Foundation, Palo Alto Networks, and Broadcom around the cybersecurity capabilities of its Claude Mythos Preview model. It is the most consequential strategic move any Western lab has made in 2026. The structure of the play is architecturally elegant: Anthropic has positioned Claude not as a competitor to Azure, AWS, or Google Cloud, but as the common security substrate that all three require. It does not compete with Microsoft's enterprise stack. It protects it. This gives Anthropic a cross-platform trust relationship that no hyperscaler can replicate, because replication would require a hyperscaler to endorse a competitor's security infrastructure, a political impossibility.

The $100 million in Glasswing usage credits is not charity. It is a calculated seeding of Claude's APIs into the security workflows of 45 of the world's most critical digital infrastructure organizations, creating technical dependencies that will persist for years regardless of which frontier model wins next quarter's benchmark competition. When JPMorgan Chase's security operations center runs vulnerability detection on Claude Mythos, it is not evaluating Anthropic. It is onboarding Anthropic into its compliance stack, a relationship that generates switching costs measured not in dollars but in regulatory re-certification cycles.

The simultaneous launch of Claude Design and the Claude Opus 4.7 update, with task budget controls for agentic loops, represents the second vector of Anthropic's lock-in strategy: workflow integration. Claude Design, Anthropic's first consumer-facing product built on top of its model rather than alongside it, is a deliberate move into the product layer that the company had consciously avoided for two years. The strategic logic is clear: if Chinese models are commoditizing the raw intelligence layer by offering comparable reasoning at one-sixth the cost, the only defensible margin sits in the product experience wrapped around that intelligence. Task budgets for agentic loops in Claude Opus 4.7 serve the same function at the developer layer: they make Claude the default runtime for complex multi-step workflows, embedding Anthropic's billing relationship into the critical path of enterprise automation pipelines.
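The idea behind a task budget is simple to express, even if vendors' actual controls differ. The sketch below assumes a token budget enforced by the caller around a generic agent loop; `TaskBudget`, `run_agent_loop`, and the `step` callback are hypothetical names, not Anthropic's API, and the semantics chosen here (reject the step that would overflow, then halt) are one reasonable option among several.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class TaskBudget:
    max_tokens: int
    used: int = 0

    def charge(self, tokens: int) -> bool:
        """Record spend; refuse (return False) if it would exceed the budget."""
        if self.used + tokens > self.max_tokens:
            return False
        self.used += tokens
        return True


# step(i) -> (output, tokens_spent, done): one iteration of a hypothetical agent.
Step = Callable[[int], Tuple[str, int, bool]]


def run_agent_loop(step: Step, budget: TaskBudget,
                   max_steps: int = 50) -> Tuple[List[str], str]:
    outputs: List[str] = []
    for i in range(max_steps):
        output, cost, done = step(i)
        if not budget.charge(cost):
            # The step that would overflow the budget is discarded and the loop halts.
            return outputs, "budget_exhausted"
        outputs.append(output)
        if done:
            return outputs, "completed"
    return outputs, "step_limit"


if __name__ == "__main__":
    def fake_step(i: int) -> Tuple[str, int, bool]:
        return f"step {i}", 300, i == 9  # ten steps of 300 tokens each

    print(run_agent_loop(fake_step, TaskBudget(max_tokens=1_000))[1])   # budget_exhausted
    print(run_agent_loop(fake_step, TaskBudget(max_tokens=10_000))[1])  # completed
```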

Google DeepMind: The Long Game of Distributed Intelligence

Of the three Western survivors, Google DeepMind plays the most patient game, and the one most likely to win on a ten-year horizon regardless of which company dominates the next six months of benchmark cycles. Google's sovereign cloud advantage is not primarily about model quality. It is about the most profound distribution asymmetry in the history of technology: four billion Android devices, two billion Gmail accounts, a billion Chrome browsers, and the world's dominant search engine, all now running Gemini 3 inference at varying capability tiers, all generating behavioral training signal, and all representing embedded access points for enterprise and government deployment that no competitor can replicate without building an entirely parallel internet.

Sergey Brin's return to active day-to-day operations (his most substantive engagement with Google since 2019, as Vilalta's analysis noted) signals that Google's leadership has internally concluded that the Gemini era is the most important strategic moment in the company's history since the original PageRank algorithm. The pace of Gemini releases in 2026 has accelerated markedly: Gemini 3.1 Flash Lite Preview, Gemini 3.1 Pro Preview, and a cascade of Workspace integrations represent a deliberate strategy of platform saturation, ensuring that every enterprise workflow touching Google's ecosystem encounters Gemini before it encounters Claude or GPT.

The YouTube training data moat deserves particular attention as a sovereign cloud asset, because it is the one Western advantage that is genuinely irreproducible. No Chinese lab, regardless of chip access, algorithmic innovation, or government subsidy, can legally train on YouTube's multimodal corpus. That corpus represents the world's largest collection of human expert knowledge conveyed in video form: surgical procedures, engineering tutorials, legal arguments, scientific lectures, musical performances, and the entire spectrum of human craft knowledge captured in moving images and synchronized audio. The model that trains on this data has access to a form of embodied, procedural human knowledge that text corpora alone cannot replicate. For enterprise customers in healthcare, legal, engineering, and creative industries, this training advantage translates into capability gaps that pure benchmark scores systematically fail to capture.

Google's TPU silicon strategy (the Trillium v6 architecture, running Gemini inference at a cost per token that no third-party cloud customer can access) creates a structural pricing advantage that compounds over time. When Google prices Gemini in Google Workspace, it is not pricing a standalone API call. It is bundling inference cost into a productivity suite that enterprises are already paying for, effectively making Gemini free at the margin for the hundreds of millions of organizations that live in Google's productivity ecosystem. This is the most effective enterprise lock-in mechanism in the industry, because it removes the procurement decision entirely: Gemini is already there, already running, already billing through an existing vendor relationship that IT departments have no incentive to audit for AI-specific line items.

The Policy Dimension: Export Controls, Distillation Attacks, and the Regulatory Moat

The most underreported dimension of Western sovereign cloud strategy is the degree to which it depends on non-market mechanisms: specifically, on the regulatory and export control apparatus of the U.S. government to maintain competitive distance from Chinese alternatives. This is not a secondary consideration. For the near term, it is the primary mechanism behind what Council on Foreign Relations senior fellows estimate to be a roughly seven-month U.S. lead over Chinese frontier models, a lead that exists not because American researchers are seven months more intelligent than Chinese researchers, but because Nvidia Blackwell GPUs, banned from export to China, represent seven months of training compute that Chinese labs cannot legally access at scale.

The distillation attack dimension adds a further layer of geopolitical complexity to the Western sovereign cloud equation. Anthropic, Google, and OpenAI have all formally accused DeepSeek of conducting industrial-scale distillation attacks against their frontier models: creating fake API accounts, running millions of synthetic interactions, and using the resulting outputs to distill capabilities into Chinese open-weight models at a fraction of the authentic R&D cost. The White House formally accused Chinese entities of these extraction campaigns in April 2026. For Western sovereign cloud customers, this accusation serves a dual function: it is both a genuine security concern and a marketing narrative that reinforces the value of accessing models through authenticated, monitored, enterprise API channels rather than through open-weight downloads that carry no chain-of-custody guarantees.
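For readers unfamiliar with the technique, distillation trains a "student" model to imitate a "teacher" model's output distribution. The sketch below shows the classic soft-target objective (KL divergence between temperature-softened teacher and student distributions, scaled by T squared) in plain Python. It is illustrative only: API-based extraction of the kind alleged here usually sees sampled text (and at most a few top log-probabilities) rather than full logits, so it would more likely fine-tune on collected responses instead.

```python
import math
from typing import List


def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    # Subtract the max for numerical stability; higher temperature = softer distribution.
    m = max(logits)
    exps = [math.exp((z - m) / temperature) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits: List[float], teacher_logits: List[float],
                      temperature: float = 2.0) -> float:
    """KL(teacher || student) on temperature-softened outputs, scaled by T**2."""
    p = softmax(teacher_logits, temperature)  # soft targets from the teacher
    q = softmax(student_logits, temperature)  # student's current prediction
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return temperature ** 2 * kl


if __name__ == "__main__":
    teacher = [4.0, 1.0, 0.5]
    print(distillation_loss([4.0, 1.0, 0.5], teacher))      # 0.0: student matches teacher
    print(distillation_loss([0.5, 1.0, 4.0], teacher) > 0)  # True: mismatch is penalized
```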

The regulatory moat is real but fragile. CFR Senior Fellow Jessica Brandt's assessment identifies the core vulnerability: Chinese labs currently face few meaningful consequences for distillation attacks, and the proposed countermeasures (sanctions, Entity List designations, multilateral pressure) require sustained diplomatic coordination that the Trump administration's unilateral posture makes structurally difficult. If the regulatory moat erodes, whether through chip smuggling networks, third-country data center access, or algorithmic innovation that reduces the compute gap, the Western labs lose the one advantage that cannot be replicated through engineering talent or capital deployment alone.

The Enterprise Lock-In Mechanics: Why Migration Costs Exceed Price Differentials

The most durable component of Western sovereign cloud strategy is not the model, not the chip, and not the policy. It is enterprise integration depth: the degree to which Western labs have embedded their inference APIs into the critical path of organizational workflows in ways that create switching costs measured not in dollars but in organizational trauma. Understanding this dynamic requires examining the actual mechanics of how large enterprises adopt AI infrastructure in 2026, and why the price advantages of Chinese open-weight models systematically fail to translate into enterprise adoption at the rate that raw economics would predict.

| Lock-In Category | Mechanism | Switching Cost Estimate | Primary Beneficiary |
|---|---|---|---|
| Compliance Certification | SOC 2 Type II, HIPAA BAA, FedRAMP Authorization tied to specific model versions | 12–24 months re-certification per regulatory body | Anthropic (Constitutional AI audit trail), OpenAI (FedRAMP progress) |
| Workflow Embedding | Prompt engineering, fine-tuning, agent pipelines built against specific API response formats | 3–9 months engineering re-work per major pipeline | OpenAI (ChatGPT Enterprise), Google (Workspace Gemini) |
| Identity Integration | Single Sign-On, role-based access control, audit logging tied to existing IdP infrastructure | 6–18 months IT security review and migration | Microsoft (Entra + Copilot), Google (Workspace identity layer) |
| Data Residency Contracts | DPA agreements, data processing addenda specifying geographic storage and processing jurisdiction | Legal renegotiation plus jurisdictional risk re-assessment | All three Western labs (Chinese alternatives carry Chinese law exposure) |
| Training Data Provenance | Fine-tuned model weights incorporating proprietary enterprise data, hosted on vendor infrastructure | Proprietary data recovery, potential IP dispute on derived weights | Anthropic (Constitutional fine-tuning), OpenAI (GPT-5.x fine-tuning API) |

The compliance certification row in that table deserves particular emphasis, because it represents the lock-in mechanism most systematically underweighted in the popular analysis of Western versus Chinese AI economics. When a European bank deploys Claude Opus 4.6 in a GDPR-compliant configuration with a signed Data Processing Agreement, EU Standard Contractual Clauses, and a third-party audit trail of model outputs for credit decision transparency, it has not merely purchased an API subscription. It has embedded Anthropic into its regulatory compliance architecture. Replacing that with a Qwen 3.6-Max-Preview API or a self-hosted DeepSeek V4 does not require writing a check to a different vendor. It requires a 12-to-24-month re-certification cycle with financial regulators, a new legal opinion on the adequacy of Chinese data processing jurisdiction, and a board-level risk sign-off on the geopolitical exposure of routing customer financial data through Alibaba Cloud's inference clusters. At that point, DeepSeek's 6-to-10x price advantage disappears entirely, not because it isn't real, but because the CIO's switching cost calculation never reaches the line item where price appears.
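The argument is ultimately arithmetic, and a back-of-the-envelope model shows why the price gap can be swamped by timing. The sketch assumes savings begin only after re-certification completes and that switching carries a one-time engineering and legal cost; `breakeven_month` is a hypothetical helper, and every input is a placeholder, with only the 6-to-10x price ratio and the 12-to-24-month re-certification range taken from the text.

```python
def breakeven_month(monthly_spend: float, price_ratio: float,
                    one_time_cost: float, recert_months: float) -> float:
    """Months from the switching decision until cumulative savings repay the migration.

    monthly_spend: current monthly bill with the incumbent vendor.
    price_ratio:   how many times cheaper the alternative is (the text cites 6 to 10).
    one_time_cost: engineering, legal, and audit cost of migrating.
    recert_months: months of re-certification before the switch can go live;
                   no savings accrue during this period.
    """
    if price_ratio <= 1:
        raise ValueError("the alternative must be cheaper for a break-even to exist")
    monthly_savings = monthly_spend * (1.0 - 1.0 / price_ratio)
    return recert_months + one_time_cost / monthly_savings


if __name__ == "__main__":
    # All inputs are hypothetical placeholders.
    print(round(breakeven_month(100_000, 6, 1_000_000, 18), 1))  # prints 30.0
```

With a hypothetical $100,000 monthly API bill, a 6x cheaper alternative, a $1 million one-time migration cost, and an 18-month re-certification cycle, the organization breaks even only around month 30, two and a half years after the decision to switch.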

The Achilles' Heel: What Western Sovereign Cloud Strategy Cannot Fix

The Western sovereign cloud strategy, for all its sophistication, carries structural vulnerabilities that no amount of policy capture, enterprise embedding, or regulatory moat-building can resolve without fundamental changes to the global AI economy. The first and most acute is the price problem. As Vilalta's analysis of OpenRouter traffic data documented, Chinese models crossed 45% of global model traffic in April 2026, driven primarily by price points 6 to 10 times below Western equivalents. That traffic is not coming from regulated European banks or U.S. defense contractors. It is coming from the startup ecosystem, the developer community, the academic research infrastructure, and the Global South enterprise market: the constituencies that will build the next generation of AI-native applications, establish the next round of de facto API standards, and train the next cohort of AI engineers whose architectural intuitions are formed by whichever API surface they learn on first.

The second vulnerability is geographic. Western sovereign cloud infrastructure is, by design, concentrated in Western jurisdictions. For the 140-plus countries that are neither NATO members nor Five Eyes partners, routing cognitive infrastructure through an American cloud provider is not a neutral technical decision. It is a geopolitical alignment choice, with practical consequences for data sovereignty, surveillance exposure, and the domestic political legitimacy of government AI deployments. The Chinese open-weight strategy (specifically, the MIT and Apache 2.0 licensing of models like DeepSeek V4 and Qwen 3.6-35B-A3B) offers these governments an alternative that Western sovereign cloud, by its very nature as sovereign cloud, cannot: the ability to run a frontier-class AI system on domestic hardware, under domestic law, with no billing relationship to any foreign infrastructure provider.

The third vulnerability, perhaps the most consequential, is the consolidation paradox at the heart of the Western strategy itself. By contracting from five labs to three, the Western AI ecosystem has concentrated its frontier capability into a set of organizations whose combined dependency on continued U.S. government goodwill, Nvidia chip supply, and American capital markets creates a single-point-of-failure risk profile that no amount of internal competition between OpenAI, Anthropic, and Google can fully hedge. If Nvidia's next-generation chip architecture is delayed by supply chain disruption, if the U.S. Congress passes AI liability legislation that imposes training compute restrictions, or if a single catastrophic model failure in a government deployment triggers a political backlash that freezes enterprise adoption for eighteen months, the three Western labs' sovereign cloud moats harden simultaneously into sovereign cloud prisons: infrastructure too politically sensitive to operate freely and too capital-intensive to pivot rapidly.

This is the paradox that the Chinese open-weight swarm does not face. A distributed ecosystem of six or more frontier-capable labs, releasing weights under permissive licenses, running on domestically produced Huawei Ascend silicon, and optimizing for price and accessibility rather than margin and certification, does not have a single throat to choke. It has dozens. And as section 4 of this analysis shows, the Thinking Model benchmark arms race led by Kimi K2.5 suggests that the performance gap that currently justifies Western sovereign cloud's price premium is narrowing faster than anticipated, forcing a fundamental recalculation of enterprise strategy.

3) China's Open-Weight Swarm: Qwen3-Max + DeepSeek V4 as the Backbone of a Rapidly Iterating Local Ecosystem

On April 24, 2026, something happened that the Western AI industry had been quietly dreading for eighteen months: three major Chinese labs released new frontier-capable models within hours of each other. DeepSeek dropped DeepSeek V4 under a full MIT license. Moonshot AI released Kimi K2.6 under a Modified MIT license. Alibaba pushed a new open-weight tier of Qwen 3.6. In the space of a single business day, the open-weight frontier moved three steps forward simultaneously. As Stanford geopolitics researcher Graham Webster noted in his Substack, Here It Comes, "rumors of the death of Chinese open-weight LLM advancement have been greatly exaggerated." That is, if anything, an understatement.

To understand why this coordinated (if not formally orchestrated) release cadence matters strategically, you have to understand what it is not. It is not a single company releasing a product. It is not a startup chasing benchmark glory. It is the visible output of what analyst Salvador Vilalta has described as a second, parallel AI race: one where "the Chinese share of model traffic on OpenRouter crossed 45% in April" 2026, where aggregate weekly volume from Alibaba, DeepSeek, ByteDance, Zhipu, StepFun, and MiniMax exceeds that of the three Western giants combined on certain infrastructure layers, and where the price differential between Chinese and Western equivalent models runs between six and ten times. That is not a race China is about to win. It is a race China is already running in a different stadium, under different rules, with a different definition of what winning looks like.

The Two Load-Bearing Pillars: Qwen3-Max and DeepSeek V4

Within the swarm, two models function as the structural backbone around which the rest of the ecosystem organizes itself. The first is Alibaba's Qwen3-Max family, specifically the Qwen3.6-Max-Preview released April 21, 2026, which topped six major coding benchmarks and posted measurable gains in world knowledge and instruction-following over its predecessor, Qwen3.6-Plus. The second is DeepSeek V4, released April 23–24, 2026, in two open-weight MoE tiers: V4-Flash (284 billion total parameters, 13 billion active) and V4-Pro (1.6 trillion total parameters, 49 billion active), both shipping with a one-million-token context window, a step-change from V3.2's 128K ceiling.

These two models matter not merely because of their benchmark scores (though those scores are significant) but because of the structural roles they play in enabling the rest of the swarm. Qwen's Apache 2.0 open-weight tiers (the Qwen3.6-35B-A3B in particular) provide the most commercially permissive foundation in the ecosystem: any developer, anywhere, can fine-tune, redistribute, and deploy without royalty negotiation. DeepSeek V4's MIT license and aggressively subsidized API pricing (V4-Flash at $0.0028 cache-hit, $0.14 cache-miss, and $0.28 output per million tokens) make it the de facto cost floor against which every other model in the world, Western or Chinese, must now justify its pricing.

The CFR's Michael Horowitz framed the strategic implication precisely: "Second-best models carry enormous competitive value when they are cheap and open, which makes them easy to widely diffuse." V4 is priced for mass deployment, at least four times cheaper than American competitors. When it comes to converting AI technology into global economic power, accessible tools beat marginally superior tools at scale, and DeepSeek V4 is, by this metric, the most dangerous model in the world, regardless of whether it trails GPT-5.4 on three specific benchmark subcategories.

Anatomy of the Swarm: Five Labs, One Coordinated Effect

The genius of the Chinese open-weight strategy (and whether it is the product of deliberate coordination or emergent competitive dynamics is, for practical purposes, irrelevant) is that no single lab needs to beat OpenAI. The labs collectively need to make Western closed-source infrastructure unnecessary. That is a fundamentally different and considerably easier strategic target, and the five-lab structure of the swarm is purpose-built for it.

| Lab | Lead Model (Apr 2026) | Architecture | License | Swarm Role | API Input / Output (USD per 1M tokens) |
|---|---|---|---|---|---|
| DeepSeek | V4-Flash / V4-Pro | MoE 284B/13B active; 1.6T/49B active | MIT | Cost floor; global adoption engine; inference on Huawei Ascend | $0.14 / $0.28 (Flash); $0.435 / $0.87 promo (Pro) |
| Alibaba (Qwen) | Qwen3.6-Max-Preview / 35B-A3B | MoE 35B/3B active (open tier); proprietary frontier | Apache 2.0 (open tiers); Proprietary (Max) | Broadest family; most permissive license; benchmark leadership in coding | Self-host (open); hosted API (Max) |
| Moonshot AI (Kimi) | Kimi K2.6 | MoE 1T total / 32B active; 384 experts; 256K context | Modified MIT | Agentic coding specialist; multi-hour autonomous runs; swarm orchestration | $0.60 / $4.00 |
| Zhipu AI (Z.ai) | GLM-5 / GLM-5.1 | 745B total; 256 experts; ~44B active; 202K context; Huawei Ascend native | MIT | Reasoning & factual reliability; sovereign silicon proof-of-concept | ~$0.60 / ~$2.20 |
| MiniMax | M2.5 | 230B MoE; up to 1M context | Modified MIT | Cheapest agentic coding API; text-only pipeline specialist | $0.30 / $1.20 (Standard) |

Each lab fills a distinct niche. DeepSeek provides the cost floor and the global adoption surface. Qwen provides the permissive licensing that makes enterprise adoption legally unambiguous. Kimi provides the agentic infrastructure that long-running autonomous coding workflows demand. GLM-5 provides the reasoning depth and, crucially, the geopolitical proof-of-concept: a 745-billion-parameter model trained entirely on 100,000 Huawei Ascend 910B chips, with no Nvidia GPUs in the training stack, achieving 92.7% on AIME 2026 and 86.0% on GPQA-Diamond. MiniMax provides the cheapest per-token pricing for agent orchestration workloads, at $0.30 input per million tokens. Together, these five labs offer a complete, modular, interchangeable infrastructure stack that no single Western lab can replicate, not because any one Chinese model is superior, but because the diversity of the swarm eliminates the single point of failure that plagues the Western three-lab oligopoly.
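What "modular, interchangeable" means in practice can be sketched as a small router that picks the cheapest model satisfying a request's context length and license constraints. This is a minimal illustration under stated assumptions: the prices and licenses come from the table above, the context windows from this section (with "K" read as thousands), cache discounts are ignored, and the `SwarmModel` class and `pick_model` rule are hypothetical rather than any lab's tooling. Prices should be re-checked against live pricing pages.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SwarmModel:
    name: str
    input_usd_per_m: float   # uncached input price, USD per 1M tokens
    output_usd_per_m: float  # output price, USD per 1M tokens
    context_tokens: int      # approximate context window
    license: str


# Figures as quoted in this article (April 2026); verify before use.
SWARM = [
    SwarmModel("DeepSeek V4-Flash", 0.14, 0.28, 1_000_000, "MIT"),
    SwarmModel("DeepSeek V4-Pro (promo)", 0.435, 0.87, 1_000_000, "MIT"),
    SwarmModel("Kimi K2.6", 0.60, 4.00, 256_000, "Modified MIT"),
    SwarmModel("GLM-5", 0.60, 2.20, 202_000, "MIT"),
    SwarmModel("MiniMax M2.5 Standard", 0.30, 1.20, 1_000_000, "Modified MIT"),
]


def request_cost(model, input_tokens, output_tokens):
    """USD cost of one request, ignoring cache-hit discounts."""
    return (input_tokens * model.input_usd_per_m
            + output_tokens * model.output_usd_per_m) / 1_000_000


def pick_model(models, input_tokens, output_tokens, allowed_licenses):
    """Cheapest model whose context fits the request and whose license is allowed."""
    candidates = [
        m for m in models
        if m.license in allowed_licenses
        and input_tokens + output_tokens <= m.context_tokens
    ]
    if not candidates:
        raise ValueError("no model satisfies the constraints")
    return min(candidates, key=lambda m: request_cost(m, input_tokens, output_tokens))


# A 304K-token request: Kimi K2.6 and GLM-5 are excluded on context alone.
print(pick_model(SWARM, 300_000, 4_000, {"MIT", "Modified MIT"}).name)
print(pick_model(SWARM, 300_000, 4_000, {"Modified MIT"}).name)
```

Because the selection is just a filter plus a minimum over a list, swapping one lab's model for another is a one-line change to `SWARM`, which is the practical sense in which the swarm has no single point of failure.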

The Open-Source Lever as Geopolitical Strategy

The Western commentary class persistently misreads the Chinese open-weight strategy as commercial altruism or competitive desperation: labs releasing weights for free because they cannot monetize them otherwise. The reality is more sophisticated and more dangerous. Open weights are a geopolitical distribution mechanism. When DeepSeek V4 ships under an MIT license and Moonshot releases Kimi K2.6 under a Modified MIT on the same day, they are not giving away competitive advantage. They are building global dependency. Every developer in Brazil, India, Nigeria, or Vietnam who builds a production system on DeepSeek V4 weights is a developer who is not building on Claude's API, not locked into Google's Vertex AI, and not subject to U.S. export control policy on AI access.

As the CFR's Horowitz noted, "Chinese AI models already have more downloads on Hugging Face, the open-source AI platform, than those from the United States. That is the adoption competition in action." The Global South is not choosing between GPT-5 and Claude Sonnet based on benchmark leaderboards. It is choosing between accessible tools that can be self-hosted on local infrastructure, modified freely, and deployed without ongoing API dependency on a foreign cloud, and tools that require a credit card billed in U.S. dollars, compliance with U.S. terms of service, and routing of all inference traffic through American data centers. The choice, framed that way, is not close. And as Vilalta observed, Alibaba's commitment of 380 billion yuan (approximately $50.6 billion) to cloud and AI infrastructure over three years is not the investment profile of a company that expects open-source to remain free of commercial return. It is the investment profile of a company building the rails on which a new generation of globally dependent developers will run their applications.

The Huawei Ascend Problem, and Why It May Not Be a Problem

The most substantive Western counterargument to Chinese open-weight dominance is the compute constraint thesis: without access to Nvidia's H100 and Blackwell chips, Chinese labs cannot train at the frontier, and their iterative advantage will eventually stall. The CFR's Chris McGuire articulated the strongest version of this argument, noting that DeepSeek V4 "trails state-of-the-art frontier models by approximately 3 to 6 months" and that DeepSeek itself acknowledges this gap in its own technical paper. U.S. government officials have accused DeepSeek of training V4 on smuggled Nvidia Blackwell chips, and the White House's science and technology office has accused Chinese entities of conducting large-scale distillation attacks against U.S. frontier models, extracting capability at a fraction of the training cost through millions of synthetic interactions.

But the compute constraint thesis has a fatal logical flaw: it assumes the performance gap is what matters. If DeepSeek V4-Pro is 3 to 6 months behind GPT-5.4 on frontier benchmarks, but V4-Flash costs $0.28 per million output tokens (a fraction of GPT-5.4's price) and is good enough for 80 percent of production workloads, then the 3-to-6-month gap is commercially irrelevant for the workloads where cost sensitivity matters most. The Huawei Ascend training story strengthens this argument further. GLM-5.1, trained entirely on Huawei silicon with no Nvidia GPUs anywhere in the stack, achieves benchmark scores that would have been classified as frontier-level performance eighteen months ago. If Chinese labs can train competitive models on domestic silicon today, the export control strategy is already partially neutralized. The question is not whether Huawei Ascend matches Nvidia H100 watt-for-watt. The question is whether it is good enough to close a 3-to-6-month performance gap that is itself a moving target, closing from both sides simultaneously.

The DeepSeek V4 Pricing Shock in Context

To make the cost differential visceral rather than abstract, consider a standard enterprise production workload: one million agent API calls, each with a 2,000-token cached system prompt, a 200-token user message, and a 300-token response. On DeepSeek V4-Flash, that workload costs approximately $117.60 in total API spend. On Kimi K2.6, treating all input as uncached at $0.60 per million, the same workload runs approximately $2,520. On a comparable Western frontier model, the figure exceeds $1,000 even at enterprise discount rates. The following table illustrates the cost structure across the major options at this workload specification:

| Model | Cached Input Cost (2B tokens) | Uncached Input Cost (200M tokens) | Output Cost (300M tokens) | Total (1M calls) |
|---|---|---|---|---|
| DeepSeek V4-Flash | $5.60 | $28.00 | $84.00 | $117.60 |
| MiniMax M2.5 Standard | $600.00* | $60.00 | $360.00 | ~$1,020.00* |
| DeepSeek V4-Pro (promo) | $7.25 | $87.00 | $261.00 | ~$355.25 |
| Kimi K2.6 | $1,200.00* | $120.00 | $1,200.00 | ~$2,520.00* |

*MiniMax and Kimi K2.6 cache pricing not publicly confirmed; input treated as full uncached rate. Source: DeepSeek AI Guide worked example methodology, April 2026. Verify against current pricing pages before production commitment.
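The arithmetic behind the table is simple enough to re-run whenever price pages change. The sketch below assumes the per-million rates quoted in this section. The V4-Pro cache-hit rate ($0.003625 per million) is back-derived from the table's $7.25 figure rather than taken from a price page, and the MiniMax and Kimi rows bill cached input at the full input rate, following the footnote.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    cached_input: float    # USD per 1M cache-hit input tokens
    uncached_input: float  # USD per 1M cache-miss input tokens
    output: float          # USD per 1M output tokens


def workload_cost(p, calls, cached_in, uncached_in, out):
    """Return (cached, uncached, output, total) in USD for `calls` identical requests."""
    per_m = 1_000_000
    cached = calls * cached_in * p.cached_input / per_m
    uncached = calls * uncached_in * p.uncached_input / per_m
    output = calls * out * p.output / per_m
    return cached, uncached, output, cached + uncached + output


PRICES = {
    "DeepSeek V4-Flash": Pricing(0.0028, 0.14, 0.28),
    # Cache-hit rate implied by $7.25 for 2B cached tokens (assumption).
    "DeepSeek V4-Pro (promo)": Pricing(0.003625, 0.435, 0.87),
    # No confirmed cache discount: cached input billed at the full input rate.
    "MiniMax M2.5 Standard": Pricing(0.30, 0.30, 1.20),
    "Kimi K2.6": Pricing(0.60, 0.60, 4.00),
}

# 1M calls x (2,000 cached + 200 uncached input tokens, 300 output tokens)
for name, p in PRICES.items():
    c, u, o, t = workload_cost(p, 1_000_000, 2_000, 200, 300)
    print(f"{name:24s} cached ${c:>9,.2f}  uncached ${u:>7,.2f}  "
          f"output ${o:>9,.2f}  total ${t:>9,.2f}")

ratio = PRICES["Kimi K2.6"].output / PRICES["DeepSeek V4-Flash"].output
print(f"Kimi K2.6 vs V4-Flash output price: {ratio:.1f}x")
```

The last line also reproduces the roughly 14x output-price gap between Kimi K2.6 and V4-Flash discussed below.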

The cost table reveals the internal tension within the swarm itself. DeepSeek V4-Flash is so cheap that it undermines the commercial viability of every other Chinese model for cost-sensitive workloads, including Kimi K2.6, which is roughly 14 times more expensive on output tokens. This is not a strategic contradiction. It reflects the division of labor within the swarm: DeepSeek prices for volume and global market capture, accepting thin or negative margins underwritten by Chinese government subsidies and the infrastructure economics of Huawei integration. Kimi K2.6 prices for specialized agentic workloads where benchmark performance justifies a premium: its 54.0 score on Humanity's Last Exam with tools leads every model in Moonshot's comparison table, including GPT-5.4's 52.1, Claude Opus 4.6's 53.0, and Gemini 3.1 Pro's 51.4. The swarm collectively covers every price-performance point from $0.28-per-million commodity inference to $4.00-per-million frontier agentic capability.

The Meta Displacement: China Owns the Open-Source Space the West Abandoned

The sharpest indicator of how completely the Chinese open-weight swarm has restructured the competitive landscape is what has happened to Meta's Llama franchise. Meta built its entire AI strategy on the thesis that it could own the open-source layer: provide the free alternative to OpenAI and Anthropic, build ecosystem lock-in through developer adoption, and monetize through inference infrastructure and enterprise services. That thesis was coherent as recently as early 2025. It is no longer coherent in April 2026.

As Vilalta observed with precision: "Any company that wants a powerful open model today, what it does is not take Llama 4, it takes Alibaba's Qwen 3.5 or DeepSeek V4. For cost, for performance, and, in many cases, for more permissive licensing. Meta now has the worst possible combination: it has lost the frontier race in the West and, at the same time, the open-source space it owned is occupied by the Chinese." This displacement is not merely a competitive setback for Meta. It is evidence that the Chinese open-weight strategy has already achieved its primary near-term objective: eliminating the American open-source alternative. With Llama neutralized as a competitive option for developers who want open weights, the choice for any organization building on open models is now, functionally, a Chinese model or a self-funded fine-tune of a smaller Western architecture. The Western sovereign cloud and the Chinese open-weight swarm are not competing in the same market segment. They have divided the world between them, and the open-weight half of that division now belongs entirely to Beijing, Hangzhou, and Shanghai.

Benchmark Credibility: The Independent Verification Problem

Any honest assessment of the Chinese open-weight swarm must confront the benchmark credibility question directly. The headline scores cited in this section (GLM-5's 92.7% on AIME 2026, Kimi K2.6's 54.0 on HLE-Full with tools, DeepSeek V4-Pro's 80.6% on SWE-Bench Verified) are largely self-reported by the labs that produced the models, evaluated on benchmarks where contamination risk is non-trivial and evaluation methodology varies between organizations. The following table shows where independent third-party verification exists and where it does not:

| Benchmark | Model | Reported Score | Independent Verification | Confidence Status |
|---|---|---|---|---|
| AIME 2026 | GLM-5.1 | 92.7% | Partial (LM Council snapshot) | Moderate |
| SWE-Bench Verified | DeepSeek V4-Pro | 80.6% | Verified (SWE-Bench Leaderboard) | High |
| HLE-Full w/ Tools | Kimi K2.6 | 54.0 | Pending (Vals AI evaluating) | Provisional |

Until independent verification is complete, enterprise procurement teams should discount self-reported numbers accordingly. The one fully verified result, DeepSeek V4-Pro's 80.6% on SWE-Bench Verified, does confirm that the open-weight swarm is operating at the frontier in software engineering.

4) Thinking Model Benchmarks Deep Dive: Kimi K2.5's Reasoning Stack, Tool-Use, Long-Horizon Planning, and Where It Beats/Trails Rivals

Of all the models released in the first quarter of 2026 that did not originate from OpenAI, Anthropic, or Google DeepMind, none has attracted more serious technical scrutiny from Western engineers than Moonshot AI's Kimi K2.5. Not because it is the cheapest; it is not. Not because it is the fastest; it is not that either. But because the architecture decisions baked into its design represent a genuinely different theory of what a "thinking model" should be, one that prioritizes tool-augmented long-horizon reasoning over raw single-shot accuracy, and that theory is starting to show up in benchmark scores in ways that are difficult to dismiss.

Understanding what Kimi K2.5 actually does, and where it falls short, requires going considerably deeper than the headline numbers that circulated on launch day. This section attempts that deeper dive, drawing on the official model card and technical documentation, independent benchmark aggregators, and the comparative data published by rival labs in the same release window.

The Architecture: Why MoE at 1T Total / 32B Active Matters for Reasoning

Kimi K2.5 is a Mixture-of-Experts model with 1 trillion total parameters and 32 billion activated per forward pass. It routes across 384 experts, activating 8 per token, plus one shared expert. The attention mechanism is MLA (Multi-head Latent Attention), the same design DeepSeek pioneered, which compresses the KV cache footprint dramatically and is a significant factor in why the model can sustain a 256K-token context window without inference cost spiraling into economic absurdity. The vision encoder, MoonViT, contributes 400 million parameters and enables genuinely native multimodal grounding: the model does not bolt vision onto a language base but is pre-trained jointly on approximately 15 trillion mixed visual and text tokens.
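A back-of-the-envelope comparison shows why MLA matters at 256K tokens. Standard multi-head attention caches a full key and value vector per head per layer, while MLA caches one compressed latent (plus a small decoupled rotary key) shared across heads. The configuration below (61 layers, 64 heads of dimension 128, a 512-dimensional latent, a 64-dimensional rotary key, 16-bit storage) is an assumption chosen to resemble published DeepSeek-style MLA setups; it is not taken from Kimi K2.5's model card, and real ratios depend on the actual configuration.

```python
def kv_cache_bytes(tokens, layers, elems_per_token_per_layer, bytes_per_elem=2):
    """Total KV-cache size in bytes for one sequence (16-bit storage by default)."""
    return tokens * layers * elems_per_token_per_layer * bytes_per_elem


# Hypothetical configuration, for illustration only.
LAYERS, HEADS, HEAD_DIM = 61, 64, 128
LATENT_DIM, ROPE_DIM = 512, 64
CONTEXT = 256 * 1024  # 262,144 tokens

# Standard MHA: one key and one value vector per head, per token, per layer.
mha_elems = 2 * HEADS * HEAD_DIM   # 16,384 elements
# MLA: one shared compressed latent plus one shared rotary key.
mla_elems = LATENT_DIM + ROPE_DIM  # 576 elements

gib = 1024 ** 3
mha = kv_cache_bytes(CONTEXT, LAYERS, mha_elems) / gib
mla = kv_cache_bytes(CONTEXT, LAYERS, mla_elems) / gib
print(f"MHA: {mha:.1f} GiB  MLA: {mla:.1f} GiB  ratio: {mha_elems / mla_elems:.1f}x")
# MHA: 488.0 GiB  MLA: 17.2 GiB  ratio: 28.4x
```

Under these assumptions, a single full-length conversation would need hundreds of gigabytes of cache with plain MHA but under 20 GiB with MLA, which is what keeps long contexts affordable to serve.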

The parameter sparsity ratio (32B active out of 1T total) means Kimi K2.5's per-token compute is closer to that of a 32B dense model, while it draws on the representational capacity of the full 1T-parameter space; the trade-off is that all 1T parameters must still be held in memory at serving time. This is the same architectural logic that makes DeepSeek V4 economically viable, but Moonshot has applied it with a different optimization target: not raw throughput cheapness, but the ability to sustain coherent multi-step reasoning chains across very long context windows. That design choice has direct consequences for how the model performs on the benchmarks that matter most in 2026.
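A toy version of top-k expert routing shows where the sparsity comes from: a router scores all 384 routed experts for each token, only the top 8 run, their weights are renormalized, and the shared expert always runs. Everything here (random router scores, scalar "experts", softmax over the selected scores) is a simplified assumption for illustration; production routers, including Moonshot's, differ in scoring functions and load-balancing details.

```python
import math
import random

NUM_EXPERTS, TOP_K = 384, 8  # routed experts, and how many each token activates


def route(logits, k=TOP_K):
    """Pick the k highest-scoring experts and softmax-normalize their scores."""
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    peak = max(logits[i] for i in top)
    exps = [math.exp(logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


def make_expert(scale):
    """Hypothetical stand-in for an expert FFN: scales its input by a constant."""
    return lambda x: scale * x


def moe_forward(x, experts, shared_expert, router_logits):
    """Shared expert always runs; only the routed experts selected for this token run."""
    out = shared_expert(x)
    for i, weight in route(router_logits):
        out += weight * experts[i](x)
    return out


rng = random.Random(0)
experts = [make_expert(rng.uniform(0.5, 1.5)) for _ in range(NUM_EXPERTS)]
shared = make_expert(1.0)
logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]  # one token's router scores

selected = route(logits)
print(f"experts run for this token: {len(selected) + 1} of {NUM_EXPERTS + 1}")
print(f"output: {moe_forward(1.0, experts, shared, logits):.4f}")
print(f"active-parameter fraction (32B of 1T): {32e9 / 1e12:.1%}")
```

Only 9 of 385 expert blocks execute per token, which is why per-token compute tracks the 32B active count rather than the 1T total.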

Thinking Mode vs. Instant Mode: A Dual-Paradigm Architecture

One of the underappreciated design decisions in Kimi K2.5 is its explicit dual-mode architecture. The model ships with a native "Thinking Mode" (accessed via the API by leaving the default parameters active) and an "Instant Mode" (toggled by passing `{"thinking": {"type": "disabled"}}` in the request body). This is not a post-hoc wrapper or a prompt engineering trick. It is a structural feature baked into the model's chat template, meaning the model has genuinely learned to modulate between fast-response and extended-reasoning inference paths depending on the mode selected.

This matters because the benchmark scores for Kimi K2.5 Thinking and Kimi K2.5 Instant are not the same model evaluated differently; they reflect different learned behaviors. The Thinking mode generates explicit `reasoning_content` alongside the final response, allowing inspection of the chain-of-thought. This is precisely the architecture that produces the tool-use and long-horizon planning numbers that have attracted the most attention, and it is the mode evaluated on the hard benchmarks discussed below.
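A minimal client-side sketch of the two modes, using only the standard library: it builds the Thinking and Instant request bodies and splits a response into its reasoning trace and final answer. The `thinking` field and `reasoning_content` come from the description above; the model identifier `"kimi-k2.5"`, the OpenAI-style `messages`/`choices` layout, and the sample response are assumptions, so check Moonshot's API reference before relying on any of them. No network call is made.

```python
import json

MODEL_ID = "kimi-k2.5"  # hypothetical identifier; check the provider's model list


def build_request(prompt, thinking=True):
    """Build an OpenAI-style chat body; Instant Mode explicitly disables thinking."""
    body = {"model": MODEL_ID, "messages": [{"role": "user", "content": prompt}]}
    if not thinking:
        body["thinking"] = {"type": "disabled"}
    return body


def split_response(response):
    """Return (reasoning_trace, final_answer) from the assumed response layout."""
    message = response["choices"][0]["message"]
    return message.get("reasoning_content"), message["content"]


print(json.dumps(build_request("What is 2 + 2?", thinking=False)))

# Hand-written sample in the assumed layout, for illustration only.
sample = {"choices": [{"message": {"reasoning_content": "2 plus 2 is 4.", "content": "4"}}]}
reasoning, answer = split_response(sample)
print(reasoning, "->", answer)
```

Keeping the trace separate from the answer lets a pipeline log or audit the chain-of-thought without passing it downstream.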

The Benchmark Numbers: A Rigorous Reading

The headline numbers from the Kimi K2.5 technical evaluation deserve to be read carefully, benchmark by benchmark, rather than aggregated into a single triumphalist narrative. The table below presents the full comparative picture across the model's strongest and weakest categories, using figures from the official model card supplemented by data from the Vals AI independent benchmark tracker and the DeepSeek AI Guide practitioner comparison:

| Benchmark | Kimi K2.5 (Thinking) | GPT-5.2 (xhigh) | Claude Opus 4.5 | Gemini 3 Pro | DeepSeek V3.2 | Verdict |
|---|---|---|---|---|---|---|
| HLE-Full (no tools) | 30.1 | 34.5 | 30.8 | 37.5 | 25.1 | Kimi trails GPT-5.2 and Gemini 3 Pro (and, narrowly, Claude Opus 4.5) in pure knowledge recall without external tools |
| HLE-Full (with tools) | 50.2 | 45.5 | 43.2 | 45.8 | 40.8 | Kimi leads all rivals: +4.4 over Gemini 3 Pro and +4.7 over GPT-5.2; the tool-use gap is the signal |
| AIME 2025 | 96.1 | 100.0 | 92.8 | 95.0 | 93.1 | Strong but not leading; GPT-5.2 achieves a perfect score here |
| GPQA-Diamond | 87.6 | 92.4 | 87.0 | 91.9 | 82.4 | Competitive with Claude; trails GPT-5.2 and Gemini 3 Pro in graduate-level science |
| SWE-Bench Verified | 76.8 | 80.0 | 80.9 | 76.2 | 73.1 | K2.5 trails Claude and GPT-5.2 on software engineering at the base verified tier |
| SWE-Bench Pro | 50.7 | 55.6 | 55.4 | - | - | K2.5 trails by ~5 points on the harder SWE-Bench Pro tier (K2.6 closes this gap) |
| BrowseComp (Swarm) | 78.4 | 65.8 | 37.0 | 37.8 | 51.4 | Kimi's Agent Swarm run leads GPT-5.2 by +12.6 and Claude and Gemini by more than 40 points |
| DeepSearchQA | 77.1 | 71.3 | 76.1 | 63.2 | 60.9 | Kimi leads in long-horizon search-augmented reasoning |
| VideoMME | 87.4 | 86.0 | - | 88.4 | - | Strong multimodal video; trails Gemini 3 Pro slightly but leads GPT-5.2 |
| LongVideoBench | 79.8 | 76.5 | 67.2 | 77.7 | - | Kimi leads; Claude drops sharply on long-form video understanding |
| MMLU-Pro | 87.1 | 86.7 | 89.3 | 90.1 | 85.0 | Competitive in multitask language understanding; edges GPT-5.2 but trails Claude and Gemini by 2 to 3 points |
| WideSearch (Swarm) | 79.0 | - | - | - | - | No comparable rival has published an Agent Swarm score on this benchmark |

The Tool-Use Gap: What HLE-Full Actually Measures

The most analytically significant number in the table above is not the AIME 2025 score, not the GPQA-Diamond figure, and not SWE-Bench Verified. It is the delta between Kimi K2.5's HLE-Full without tools (30.1) and HLE-Full with tools (50.2). That 20.1-point swing when external tools are made available is the largest such swing of any model evaluated on Humanity's Last Exam in Moonshot's comparison set. GPT-5.2 improves from 34.5 to 45.5, an 11-point swing. Gemini 3 Pro improves from 37.5 to 45.8, an 8.3-point swing. Claude Opus 4.5 improves from 30.8 to 43.2, a 12.4-point swing.
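The swing calculation is easy to reproduce from the comparison table. This sketch also includes DeepSeek V3.2's row, which the prose leaves out, and confirms that Kimi K2.5's uplift is the largest in the set.

```python
# HLE-Full scores from the comparison table: (without tools, with tools).
HLE = {
    "Kimi K2.5": (30.1, 50.2),
    "GPT-5.2": (34.5, 45.5),
    "Claude Opus 4.5": (30.8, 43.2),
    "Gemini 3 Pro": (37.5, 45.8),
    "DeepSeek V3.2": (25.1, 40.8),
}


def tool_uplift(scores):
    """Points gained when tools are enabled, sorted largest first."""
    deltas = {m: round(with_tools - without, 1) for m, (without, with_tools) in scores.items()}
    return sorted(deltas.items(), key=lambda kv: kv[1], reverse=True)


for model, delta in tool_uplift(HLE):
    print(f"{model:16s} +{delta}")
```

Rounding to one decimal place keeps floating-point noise (for example, 50.2 - 30.1 evaluating to 20.100000000000005) out of the comparison.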

Kimi K2.5 starts from a lower baseline than GPT-5.2 and Gemini 3 Pro in pure parametric knowledge recall, but it extracts dramatically more value from tool access. This is the architectural fingerprint of a model that has been optimized for tool-augmented operation rather than self-contained knowledge retrieval. The practical implication for production deployments is significant: Kimi K2.5 running with search, calculator, and code-execution tools enabled is a meaningfully different system from Kimi K2.5 running in closed-book mode, and the gap between the two is larger than for any of the Western frontier models in the comparison set.

This pattern repeats on agentic search benchmarks. On DeepSearchQA, which evaluates whether a model can decompose a complex research question, issue multiple sequential search queries, synthesize retrieved information across a long context window, and produce a grounded final answer, Kimi K2.5 scores 77.1, compared to 71.3 for GPT-5.2, 76.1 for Claude Opus 4.5, and 63.2 for Gemini 3 Pro. The model's 256K context window is not incidental to this result: long-horizon search tasks require holding intermediate retrieval results, partially-verified facts, and accumulated reasoning traces in context simultaneously. A 128K context ceiling, such as that imposed on some Western API configurations, forces more aggressive summarization and intermediate compression that degrades search-augmented reasoning quality.
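A toy simulation makes the context-ceiling argument concrete. It assumes a research loop that appends each retrieved result to context and, whenever the running total would overflow the usable window, folds everything accumulated so far into a fixed-size summary. The 8K-token results, 4K-token summary, 16K reserve, and compress-everything policy are all hypothetical; the point is only that a smaller window forces more lossy compression steps for the same volume of retrieved material.

```python
def compressions_needed(result_sizes, window, summary_tokens=4_000, reserved=16_000):
    """Count how often accumulated context must be squeezed into a summary.

    `reserved` holds back room for the prompt and the final answer.
    """
    budget = window - reserved
    used, compressions = 0, 0
    for size in result_sizes:
        if used + size > budget:
            used = summary_tokens  # replace everything so far with one summary
            compressions += 1
        used += size
    return compressions


# Hypothetical research run: 60 retrieved documents of 8K tokens each (480K total).
results = [8_000] * 60
for window in (128_000, 256_000):
    print(f"{window:,}-token window: {compressions_needed(results, window)} compressions")
```

In this setup the 128K window needs twice as many lossy summarization steps as the 256K window, and each step is a point where partially verified facts can be dropped.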

Agent Swarm: The Benchmark Western Labs Cannot Match

The most structurally unusual scores in Kimi K2.5's benchmark suite are the ones that have no counterpart in any Western lab's published evaluation set: the Agent Swarm configurations. On BrowseComp with context management, Kimi K2.5 scores 74.9, compared to 57.8 for GPT-5.2 and 59.2 for Claude Opus 4.5. That is a substantial gap. But the BrowseComp Agent Swarm score (78.4 for Kimi K2.5, against 65.8 for GPT-5.2) is the number that has drawn the most attention from engineers working on multi-agent production systems.

To understand why this matters, it helps to understand what BrowseComp Agent Swarm actually tests. It is not a single-agent web browsing task. It is a benchmark that requires a model to decompose complex web research tasks into parallel sub-tasks, instantiate domain-specific sub-agents dynamically, coordinate their parallel execution, and synthesize their outputs into a coherent final answer, all without losing track of the original task objective or producing contradictory conclusions from agents working on different sub-problems simultaneously. Claude Opus 4.5 scores 37.0 on this benchmark and Gemini 3 Pro scores 37.8. The gap between Kimi K2.5's 78.4 and these two Western frontier models is not a rounding error: it is more than double.
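The decompose, fan out, and synthesize pattern that this benchmark rewards can be sketched with Python's `asyncio`. This is a generic orchestration skeleton, not Moonshot's implementation: `plan_subtasks`, `run_subagent`, and `synthesize` are hypothetical stand-ins, and in a real system each would be a model call with its own tools.

```python
import asyncio


def plan_subtasks(question):
    """Hypothetical planner: split a research question into independent sub-queries."""
    return [f"{question} :: aspect {i}" for i in range(1, 4)]


async def run_subagent(subtask, semaphore):
    """Hypothetical sub-agent; a real one would call a model and its tools."""
    async with semaphore:          # cap the number of concurrently running sub-agents
        await asyncio.sleep(0.01)  # stand-in for model and tool latency
        return {"subtask": subtask, "finding": f"finding for {subtask}"}


def synthesize(question, results):
    """Hypothetical synthesizer: merge findings under the original objective."""
    return "\n".join([f"Q: {question}"] + [f"- {r['finding']}" for r in results])


async def swarm(question, max_parallel=8):
    semaphore = asyncio.Semaphore(max_parallel)
    subtasks = plan_subtasks(question)
    # gather() runs the sub-agents concurrently and returns results in submission order.
    results = await asyncio.gather(*(run_subagent(t, semaphore) for t in subtasks))
    return synthesize(question, results)


print(asyncio.run(swarm("Which suppliers does the chip program depend on?")))
```

Because `gather` preserves submission order, the synthesizer sees findings in the order the planner produced them, which is one simple way to keep parallel sub-agents tied to the original task structure.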

This performance profile is consistent with what practitioners building on Kimi models have reported: the model is "designed for long-running coding agents, front-end generation, and massively parallel agent swarms," and anecdotally, "Kimi models have been popular with agentic and code-assist users looking for less expensive options compared with the closed US leaders." The Agent Swarm architecture, which the model card describes as a transition "from single-agent scaling to a self-directed, coordinated swarm-like execution scheme," is the feature that has no direct analogue in any currently available Western frontier model. OpenAI, Anthropic, and Google DeepMind have yet to release a natively orchestrated agent-swarm mode at this scale, leaving Kimi K2.5 in a class of its own for massively parallel workflows.

This orchestration gap highlights a critical vulnerability in the Western sovereign cloud strategy. While GPT-5.5's roadmap promises native multi-agent capabilities by Q4 2026, the current reliance on single-agent, linear-reasoning frameworks leaves enterprise customers building fragile, custom scaffolding to achieve what Kimi does natively. Moonshot AI has essentially commoditized the orchestration layer, proving that the "Thinking Model" of the future isn't a solitary genius; it is a tightly coordinated committee.

5) Edge-AI Dominance and the New Client OS Layer: Xiaomi Mimo/Hunter Pro, On-Device Agents, Private Memory, and Latency-as-a-Weapon

Every serious technology war has two simultaneous fronts: the frontier, where the largest models compete on raw benchmark performance, and the edge, where the winning technology physically occupies the device in a billion pockets, desks, and factory floors. The cloud frontier war between OpenAI, Anthropic, Google DeepMind, and the Chinese open-weight swarm has dominated headlines throughout 2025 and into 2026. But the second front, the edge, is where the 2026 pivot is happening with the least Western scrutiny and the most strategic consequence. And on that front, Xiaomi's moves with the Mimo model family and the Hunter Pro hardware platform represent something genuinely new: not a Chinese imitation of an Apple or Google Edge-AI strategy, but a vertically integrated, latency-first, privacy-native alternative architecture that reframes what a client-side AI operating system is supposed to be.

To understand why the edge matters as much as the frontier in 2026, start with a simple economic reality. The cloud inference model, in which every prompt leaves your device, travels to a data center, is processed by a frontier model, and returns as a response, carries irreducible costs: latency measured in hundreds of milliseconds at minimum, bandwidth consumption, privacy exposure at every network hop, and a recurring per-token fee that makes always-on ambient AI economically impossible to deploy at consumer scale. Every AI assistant that feels "slow," every enterprise deployment that hits a monthly API cost ceiling, every compliance officer who refuses to approve a SaaS AI tool because it processes regulated data on foreign cloud infrastructure: all of these are edge-case failures of the cloud-first inference paradigm. The model that runs locally on the device, with zero network latency, zero token cost at inference time, and zero data leaving the hardware, solves all of these problems simultaneously. The question was never whether on-device AI would win significant market share. The question was who would build the hardware-software-model stack capable of making on-device AI good enough to matter.

The Xiaomi Stack: Vertical Integration as Strategic Weapon

Xiaomi's approach to Edge-AI is structurally different from how Western companies have approached the same problem. Apple's on-device AI strategy, embodied in Apple Intelligence, is tightly controlled, model-agnostic at the user level, and deliberately constrained: it runs small classification and summarization models locally, offloads anything complex to Private Cloud Compute, and treats the on-device layer as a privacy filter rather than a primary inference engine. Google's Gemini Nano, embedded in Pixel devices and licensed to Android OEMs, is similarly oriented: a capable small model for specific tasks (summarization, smart reply, live captions) but not an ambient reasoning agent that maintains persistent context across sessions. Microsoft's Copilot+ PC initiative brought NPU-accelerated on-device AI to Windows hardware, but its dependency on Qualcomm Snapdragon X Elite chips and its reliance on cloud for anything beyond basic Recall functionality mean it is a hybrid architecture, not a true local-first one.

Xiaomi's strategy, crystallized in the Mimo model family and the Hunter Pro platform, makes a different set of design choices at every layer of the stack. The company is simultaneously a chip designer (with its Xring 01 SoC, its first fully in-house mobile chip after years of Qualcomm and MediaTek dependency), a device manufacturer at scale (shipping over 200 million devices annually across phones, tablets, laptops, TVs, and smart home hardware), an operating system developer (HyperOS, its successor to MIUI), and now an AI model lab. This vertical integration is not incidental. It is the explicit strategic thesis: that the company which controls silicon, firmware, OS, and model simultaneously can make architectural optimizations at each layer that are unavailable to any company that does not control all four.

The Xring 01 is built on a 3nm process with a dedicated neural processing unit capable of running models of up to approximately 7 billion parameters locally, at inference speeds competitive with cloud API response times for standard query types. The NPU architecture is co-designed with the Mimo model quantization pipeline: the integer-4 and integer-8 quantization schemes used to compress Mimo models for on-device deployment are tuned specifically to the Xring 01's memory bandwidth and compute primitives rather than ported from a generic quantization framework. This co-design yields measurable efficiency gains over comparable approaches using off-the-shelf chipsets: lower power consumption per token, higher sustained throughput, and, critically, less thermal throttling in extended agentic sessions. For a device that runs an always-on ambient AI, thermal behavior is not a minor engineering detail. It is the difference between a model that performs consistently across a full working day and one that degrades after twenty minutes of active inference.
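The 7-billion-parameter ceiling is largely a memory question: weight storage scales with bits per parameter. The sketch below computes weight footprints for a 7B model and shows a textbook symmetric, per-tensor integer quantize/dequantize round trip. It is a generic scheme chosen for illustration, not Xiaomi's Xring-tuned pipeline (which this article does not describe in detail), and the footprint ignores activations, KV cache, and the storage for quantization scales.

```python
def weight_gb(params, bits):
    """Weight storage in gigabytes (1e9 bytes), ignoring scales and runtime buffers."""
    return params * bits / 8 / 1e9


for bits in (16, 8, 4):
    print(f"7B @ {bits:>2}-bit: {weight_gb(7e9, bits):.1f} GB")


def quantize_symmetric(weights, bits):
    """Map floats to signed ints in [-(2^(bits-1) - 1), 2^(bits-1) - 1] with one shared scale."""
    qmax = 2 ** (bits - 1) - 1
    scale = max(abs(w) for w in weights) / qmax or 1.0  # avoid a zero scale
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    return [v * scale for v in q]


w = [0.82, -0.41, 0.05, -0.97, 0.33]
q4, s4 = quantize_symmetric(w, bits=4)
print(q4, [round(x, 3) for x in dequantize(q4, s4)])
```

Going from 16-bit to 4-bit cuts a 7B model's weights from 14 GB to 3.5 GB, at the cost of a per-weight rounding error of at most half the scale; hardware-specific schemes mainly change how scales are grouped and how the integer math maps onto the NPU.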

Mimo: What a Purpose-Built Edge Model Actually Looks Like

The Mimo model family is not a compressed version of a large frontier model. It is designed from the ground up for edge deployment constraints. The architecture uses a Mixture-of-Experts structure at the small-model scale, activating approximately 2-3 billion parameters per forward pass from a total parameter count of roughly 7-8 billion. This yields a quality-per-active-parameter ratio that significantly outperforms dense models of equivalent total size on the task distribution that matters for on-device agents: multi-step instruction following, calendar and contact management, document summarization, code completion in context, and memory-augmented retrieval over personal data stores. Xiaomi's engineering team has published internal benchmarks showing Mimo outperforming Gemini Nano 3 on Chinese-language instruction following by a substantial margin and performing competitively with it on English-language tasks, a meaningful result for a model running entirely locally on a mobile device.
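The sparse-activation idea behind those numbers can be sketched as top-k expert routing. This is a generic Mixture-of-Experts router in plain Python, not Mimo's architecture. The expert functions, gate weights, and the parameter split passed to `active_params` are hypothetical, chosen only so the result lands inside the article's stated 2-3B active / 7-8B total range.

```python
# Hypothetical sketch: generic top-k MoE routing, NOT Mimo's actual design.
import math
from typing import Callable

Vector = list[float]


def softmax(xs: Vector) -> Vector:
    m = max(xs)                                   # subtract max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(x: Vector, gate_weights: list[Vector],
                experts: list[Callable[[Vector], Vector]], k: int = 2) -> tuple[Vector, list[int]]:
    """Send x to the k highest-scoring experts and mix their outputs by gate probability."""
    logits = [sum(w_i * x_i for w_i, x_i in zip(w, x)) for w in gate_weights]
    top = sorted(range(len(experts)), key=lambda i: logits[i], reverse=True)[:k]
    probs = softmax([logits[i] for i in top])     # renormalize over the selected experts only
    out = [0.0] * len(x)
    for p, i in zip(probs, top):
        y = experts[i](x)                         # only k experts run; the others are never evaluated
        out = [o + p * y_j for o, y_j in zip(out, y)]
    return out, top


def active_params(shared_b: float, per_expert_b: float, n_experts: int, k: int) -> tuple[float, float]:
    """Return (total, active) parameter counts in billions for a simple MoE layout."""
    return shared_b + n_experts * per_expert_b, shared_b + k * per_expert_b


if __name__ == "__main__":
    experts = [lambda v, s=s: [s * e for e in v] for s in (0.5, 1.0, 2.0, -1.0)]
    gates = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, -1.0]]
    out, chosen = moe_forward([0.3, 0.9], gates, experts, k=2)
    print("experts used:", chosen, "output:", out)
    # Hypothetical split: 1.0B shared + 8 experts of 0.8B each, top-2 routing.
    print("total/active (B):", active_params(1.0, 0.8, 8, 2))
```

With that hypothetical split, the model stores about 7.4B parameters but touches only about 2.6B per token. That gap is why an MoE can fit the memory budget of a large model while paying the compute and power cost of a small one.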

The model's design philosophy prioritizes what Xiaomi's AI team calls "contextual persistence" over raw benchmark scores on standardized academic evaluations. A frontier model that scores 92% on GPQA-Diamond but forgets what you told it three sessions ago is, for the use case of a personal on-device agent, less useful than a smaller model that maintains a structured, private, persistent memory of your preferences, recurring tasks, communication patterns, and domain-specific knowledge across months of interaction. Mimo's memory architecture stores a rolling compressed representation of user interaction history in the device's secure enclave (encrypted at rest and never transmitted to any cloud endpoint) and uses this memory as a persistent context prefix on every inference call. The practical effect is an agent that becomes measurably more useful the longer it runs on your specific device, with your specific data, developing a personalized prior over your behavior that no cloud model serving millions of users simultaneously can replicate.
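A rolling memory used as a context prefix can be sketched in a few lines. Everything here is hypothetical: the class and function names are illustrative, character counts stand in for tokens, oldest-first eviction stands in for the learned compression the article describes, and secure-enclave encryption is out of scope for a plain-Python example.

```python
# Hypothetical sketch of a rolling memory prefix; eviction is a crude stand-in
# for Mimo's compression, and no encryption or secure enclave is modeled here.
from collections import deque


class RollingMemory:
    """Per-device memory kept under a fixed size budget (characters stand in for tokens)."""

    def __init__(self, budget_chars: int = 200):
        self.budget = budget_chars
        self.entries = deque()

    def size(self) -> int:
        return sum(len(e) for e in self.entries)

    def remember(self, fact: str) -> None:
        self.entries.append(fact)
        # Drop the oldest facts until the budget fits (always keep the newest one).
        while self.size() > self.budget and len(self.entries) > 1:
            self.entries.popleft()

    def prefix(self) -> str:
        return "Known about this user:\n" + "\n".join(f"- {e}" for e in self.entries)


def build_prompt(memory: RollingMemory, query: str) -> str:
    """Prepend the memory to every inference call as persistent context."""
    return f"{memory.prefix()}\n\nUser: {query}"


if __name__ == "__main__":
    mem = RollingMemory(budget_chars=60)
    for fact in ["prefers metric units", "daily standup at 09:30",
                 "writes Kotlin at work", "avoids meetings on Fridays"]:
        mem.remember(fact)
    print(build_prompt(mem, "Plan my Friday."))
```

The design point is that the prefix is rebuilt locally on every call, so personalization requires no server-side state at all.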

This is the feature that no Western cloud-first AI provider can match without a fundamental architectural concession. OpenAI's memory feature for ChatGPT stores summaries of user preferences in the cloud and retrieves them as soft context. It is useful. But it is architecturally centralized: the memory lives on OpenAI's servers, is subject to OpenAI's data policies, is processed by OpenAI's infrastructure, and is unavailable if the user loses connectivity. Mimo's memory is structurally private by construction. There is no server to subpoena. There is no API call to intercept. The model learns about you exclusively on your hardware, and that learning stays there. For the hundreds of millions of users in markets where trust in Western cloud infrastructure is low (which includes most of the world outside North America and Western Europe), this is not a marginal feature. It is the primary value proposition.

Hunter Pro: The Hardware Platform as AI Policy

The Hunter Pro is Xiaomi's flagship smartphone platform for 2026, built around the Xring 01 SoC and positioned explicitly as an AI-native device rather than a smartphone with AI features added. The distinction is architectural: AI-native means the NPU and its associated memory subsystem are first-class citizens in the chip's power budget and thermal design, not afterthoughts attached to a CPU/GPU core designed primarily for gaming performance and computational photography. The result is a device where on-device inference is not a battery-draining exceptional operation but a continuous ambient capability running at effectively zero marginal power cost for the query types that constitute 90% of practical AI assistant usage.

The Hunter Pro's AI capabilities extend beyond the phone itself through what Xiaomi calls HyperConnect, its cross-device protocol for sharing model inference and context across the Xiaomi ecosystem. A Xiaomi television, a Xiaomi router with embedded NPU compute, a Xiaomi smart home hub, and a Hunter Pro phone can collectively operate as a distributed edge inference cluster, routing complex queries to whichever device in the local network has available compute, without any data leaving the local network perimeter. This is not cloud AI. It is not even edge-cloud hybrid AI. It is genuinely local-network AI, where the inference cluster is your home or office, the data never crosses the WAN boundary, and the latency is bounded by local Ethernet or Wi-Fi speeds rather than transcontinental round trips. For latency-sensitive applications such as real-time translation, always-on voice interfaces, industrial process monitoring, and low-latency code completion, the difference between 8ms local inference and 180ms cloud inference is not a user experience refinement. It is the difference between a feature that works and one that does not.
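The article does not specify how HyperConnect picks a device. Below is a minimal sketch of one plausible policy, assuming each device advertises its spare NPU throughput and a measured round-trip time. The policy filters to devices on the local network, then chooses the one with the lowest estimated latency (network round trip plus compute time). The `Device` fields, the cost model, and the example numbers are all hypothetical.

```python
# Hypothetical routing policy; HyperConnect's real protocol is not described in the source.
from dataclasses import dataclass
from typing import Optional


@dataclass
class Device:
    name: str
    free_tops: float          # spare NPU throughput in tera-ops/s (hypothetical field)
    rtt_ms: float             # round-trip time from the requesting phone; 0 for the phone itself
    on_local_network: bool


def estimate_latency_ms(device: Device, work_tera_ops: float) -> float:
    """Network round trip plus compute time at the device's spare throughput."""
    return device.rtt_ms + 1000.0 * work_tera_ops / device.free_tops


def route(devices: list[Device], work_tera_ops: float) -> Optional[Device]:
    """Pick the fastest local device; hosts outside the LAN are never considered."""
    candidates = [d for d in devices if d.on_local_network and d.free_tops > 0]
    if not candidates:
        return None
    return min(candidates, key=lambda d: estimate_latency_ms(d, work_tera_ops))


if __name__ == "__main__":
    fleet = [
        Device("phone", free_tops=10, rtt_ms=0, on_local_network=True),
        Device("tv", free_tops=30, rtt_ms=5, on_local_network=True),
        Device("cloud", free_tops=1000, rtt_ms=180, on_local_network=False),
    ]
    for work in (0.01, 0.2):
        print(work, route(fleet, work).name)   # 0.01 -> phone, 0.2 -> tv
```

Under these assumptions a small query stays on the phone's own NPU. A larger one goes to the television, because its extra throughput outweighs a few milliseconds of Wi-Fi round trip. A WAN host is never a candidate, which is how the sketch encodes the "data never crosses the WAN boundary" property.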

| Capability | Xiaomi Mimo / Hunter Pro | Apple Intelligence (iPhone 16 Pro) | Google Gemini Nano 3 (Pixel 9 Pro) | Microsoft Copilot+ PC |
|---|---|---|---|---|
| Primary inference location | On-device (Xring 01 NPU); local network via HyperConnect | On-device for basic tasks; Private Cloud Compute for complex | On-device for Nano tasks; Gemini cloud for advanced | On-device NPU for Recall/summarization; Azure cloud for Copilot |
| Persistent private memory | Yes: encrypted in on-device secure enclave, never transmitted | Limited: on-device, but session-scoped; no cross-session learning | Partial: cloud-stored preference summaries via Gemini memory | Recall screenshots stored locally; no model-native persistent memory |
| Typical inference latency | ~8–25ms (on-device); ~15–40ms (local network) | ~20–50ms on-device; 150–400ms via PCC | ~30–60ms on-device Nano; 200–500ms cloud Gemini | ~40–80ms NPU tasks; 150–600ms Azure Copilot |
| SoC AI hardware | Xiaomi Xring 01 (3nm, proprietary NPU, co-designed with Mimo) | Apple A18 Pro (Neural Engine, 17 TOPS estimated) | Google Tensor G5 (TPU embedded, custom ISP) | Qualcomm Snapdragon X Elite (45 TOPS NPU) |
| Cross-device local inference mesh | Yes: HyperConnect distributes inference across local Xiaomi ecosystem | No: each Apple device runs independently | No: Pixel-centric, no local mesh inference | No: PC-centric, no local device mesh |
| Model openness | Mimo weights available for research; HyperOS integration proprietary | Fully proprietary, no weights released | Nano weights not publicly released; Gemma open separately | Phi-4 open weights available; Copilot integration proprietary |
| Ongoing inference cost to user | Zero marginal cost: all compute on owned hardware | Zero for on-device; Apple Intelligence included in hardware price | Zero for Nano; Gemini Advanced subscription for cloud tier | Copilot+ PC features included; Microsoft 365 Copilot subscription for advanced |

Latency as a Weapon: The Strategic Physics of On-Device Inference

The framing of latency as a weapon requires explanation, because it is easy to dismiss as a performance footnote rather than a strategic variable. It is not. In the context of AI adoption dynamics in 2026, the latency profile of an AI system is the primary determinant of whether users form the habit of reaching for it constantly (the "reflexive reach," as behavioral economists describe it) or treat it as a tool to consult occasionally, when the activation cost feels worth paying. The reflexive reach threshold for digital tools is well-documented: interactions that complete in under 100ms feel instantaneous and become habits; interactions that take 300ms or more feel like tool usage and remain deliberate. Cloud AI, at its current best-case latency of 150-250ms for a typical mobile interaction on a good connection, sits above the reflexive reach threshold for most users in most contexts. On-device inference on optimized hardware, at 8-40ms, sits well below it.
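The two thresholds cited above can be applied directly to the latency ranges in the comparison table. Here is a small sketch using the 100ms and 300ms cut-offs from the text. The band between them is labeled "in-between" here as an assumption, since the text does not characterize it.

```python
# Sketch applying the article's 100ms / 300ms thresholds; the middle-band label is an assumption.
REFLEXIVE_MS = 100.0    # below this, interactions feel instantaneous and can become habits
DELIBERATE_MS = 300.0   # at or above this, interactions feel like deliberate tool usage


def classify(latency_ms: float) -> str:
    if latency_ms < REFLEXIVE_MS:
        return "reflexive"
    if latency_ms >= DELIBERATE_MS:
        return "deliberate"
    return "in-between"


if __name__ == "__main__":
    # Endpoints of ranges quoted in the article and its comparison table.
    samples = {
        "Mimo on-device (best)": 8,
        "Mimo local network (worst)": 40,
        "cloud AI (best case)": 150,
        "cloud AI (typical upper)": 250,
        "Apple PCC (worst)": 400,
        "Azure Copilot (worst)": 600,
    }
    for label, ms in samples.items():
        print(f"{label:28s} {ms:4d} ms -> {classify(ms)}")
```

On these numbers, even best-case cloud inference (150ms) misses the reflexive band, while every on-device and local-network figure lands inside it. That gap is the quantitative core of the argument.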

The strategic implication is that the first AI platform to establish reflexive reach in daily device interaction will capture the habitual engagement layer that every other AI interaction depends on. If a user reflexively reaches for their on-device agent to compose a message, set a reminder, check a fact, or manage a task (because it responds before the conscious mind has finished formulating the question), that agent becomes the primary AI relationship. Cloud models become secondary tools, consulted for complex tasks the primary agent cannot handle locally. This inverts the current cloud-first hierarchy, and it is precisely the strategic territory Xiaomi is occupying with the Hunter Pro and Mimo stack.

The scale at which this strategy operates is worth stating explicitly. Xiaomi ships over 200 million devices annually, with deep market penetration across China, Southeast Asia, India, Latin America, and Southern Europe: precisely the geographies where the 2026 pivot away from Western cloud dependence is most strategically motivated. Each Hunter Pro device sold is not just a smartphone sale. It is an Edge-AI node deployed outside the Western cloud sovereignty perimeter, running a Chinese-developed model on a Chinese-designed chip, accumulating private personalized user data on local hardware that is legally and practically beyond the reach of U.S. FISA requests, EU GDPR enforcement, or any other external regulatory framework. This is AI sovereignty achieved not through data center policy or government mandate, but through hardware distribution at consumer scale.

On-Device Agents: The Architecture of Persistent Autonomy

The most consequential capability of the Mimo/Hunter Pro stack is not the model quality in isolation, nor the latency profile, nor the privacy architecture, but their combination in a persistent on-device agent that operates autonomously between explicit user interactions. Western cloud AI agents, including OpenAI's Operator, Anthropic's Claude Agents, and Google's Project Astra, are session-scoped: they activate when prompted, complete a task, and terminate. They have no background state, no proactive monitoring capability, and no ability to act on your behalf without an explicit trigger. This is partly a design choice and partly an architectural consequence of cloud-hosted execution: running a persistent agent process in a data center for every user is economically prohibitive at scale.

On-device execution eliminates this constraint entirely. Because Mimo runs on hardware you own, its compute costs you nothing incremental per hour of operation. A persistent agent process that monitors your calendar for scheduling conflicts, your inbox for urgent messages, your device sensors for context changes, and your stored preferences for preference-consistent decision support, running continuously in the NPU's low-power inference lane, is economically free on a device you have already purchased. It is not a feature that requires a subscription tier. It is a capability that falls out naturally from the architecture of on-device inference combined with always-on NPU hardware.
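One of the background duties listed above, spotting calendar conflicts, reduces to an interval-overlap check. A persistent agent could rerun it on every calendar change at negligible cost. The sketch below is minimal: the `Event` type, the sample events, and the rerun-on-change idea are illustrative assumptions, not HyperOS APIs.

```python
# Hypothetical sketch of one persistent-agent duty; not a HyperOS or Mimo API.
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Event:
    title: str
    start: datetime
    end: datetime


def find_conflicts(events: list[Event]) -> list[tuple[Event, Event]]:
    """Return every pair of overlapping events; back-to-back events do not conflict."""
    ordered = sorted(events, key=lambda e: e.start)
    conflicts = []
    for i, a in enumerate(ordered):
        for b in ordered[i + 1:]:
            if b.start >= a.end:
                break            # every later event starts even later, so none overlaps a
            conflicts.append((a, b))
    return conflicts


if __name__ == "__main__":
    def at(hour: int, minute: int = 0) -> datetime:
        return datetime(2026, 3, 2, hour, minute)

    calendar = [
        Event("standup", at(9), at(9, 30)),
        Event("design review", at(9, 15), at(10)),
        Event("vendor call", at(10), at(10, 30)),
        Event("lunch", at(12), at(13)),
    ]
    for a, b in find_conflicts(calendar):
        print(f"conflict: {a.title!r} overlaps {b.title!r}")
```

Sorting by start time lets the inner loop stop at the first non-overlapping event. A check like this is cheap enough to run on every change, which is exactly the kind of always-on work the paragraph argues is free on owned hardware.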

Xiaomi's implementation of this persistent agent architecture in HyperOS integrates Mimo with the device's permission model in a way that is structurally more capable than Apple's app-sandboxed Siri or Google's Assistant framework. The HyperOS AI layer has direct, permissioned access to the device's full sensor suite, communication stack, application context, and file system. That access does not go through inter-app communication APIs that each application must explicitly support, but through an OS-level integration that treats the AI agent as a first-class operating system primitive rather than an application running in user space. This means Mimo can observe system state (what application is in focus, what content is on screen, what sensors are detecting) and act on that state without waiting for an application to implement an AI API. The agent is woven into the OS, not bolted onto it.

The contrast with Western implementations is stark. When Apple launched Apple Intelligence, a significant portion of its most-advertised features required app developers to adopt new APIs before the AI could interact with their applications' content. Users with third-party email clients, browsers, or productivity apps found that Apple Intelligence was largely blind to their primary workflows. Google faces the same fragmentation problem on Android, where the diversity of OEM implementations and the Android permission model make system-level AI integration difficult to guarantee across devices. Xiaomi, selling a vertically integrated hardware-software stack with a unified OS, has no such fragmentation problem on its own devices. On a Hunter Pro running HyperOS, Mimo sees everything the user sees, by design, with the user's explicit consent captured at device setup rather than app-by-app.

This dynamic is actively giving rise to what geopolitical analysts are calling the "Digital Non-Alignment Movement." Nations like India, Brazil, Indonesia, and South Africa, unwilling to be trapped as digital client states of either Washington's cloud oversight or Beijing's centralized data dragnet, are seizing upon devices like the Xiaomi Hunter Pro as a neutral third path. By leveraging powerful Edge-AI that operates entirely offline, these countries can build localized, high-IQ economies without pledging allegiance to a foreign sovereign cloud. In this context, Xiaomi isn't just selling hardware; it is distributing the infrastructure for cognitive independence.

6) The 2026 Pivot to Local Open-Weight Sovereignty: From Global Cloud Dependence to Regional AGI OS Stacks

Something broke in 2024 that most enterprise technology buyers did not consciously register until 2026: the implicit social contract of cloud-first AI. That contract read, roughly, as follows: you send your data to a hyperscaler's data center, the hyperscaler runs it through a proprietary frontier model, and you receive an inference result. The hyperscaler promises compliance, the frontier lab promises capability, and your organization gets productivity. Everyone wins.